2026 Great Lakes Data, AI & Analytics Summit

The Great Lakes Data, AI & Analytics Summit offers a unique one-day experience for professionals in analytics, IT, and business. It features keynotes from industry experts, leadership discussion panels, in-depth case study sessions from local practitioners, software demonstrations from top vendors, and plenty of networking opportunities. Attendees will learn about the latest analytics software, best practices, and success stories to help them capitalize on data and analytics strategy, improve data governance, and extract business value from their data assets.

As the ONLY event of its kind for data and analytics professionals in Michigan, it offers an unmatched opportunity for learning and professional growth. You won’t want to miss out.

Thank you for attending!

THURSDAY, APRIL 9, 2026 | 8:00AM - 5:00PM

TROY MARRIOTT | 200 W. BIG BEAVER RD, TROY, MI

Registration Fee: $249

Subscribe to our email list to learn more about the event and get updates on registration, speakers, sponsors, and sessions, or check out past content in our Summit Resource Library.

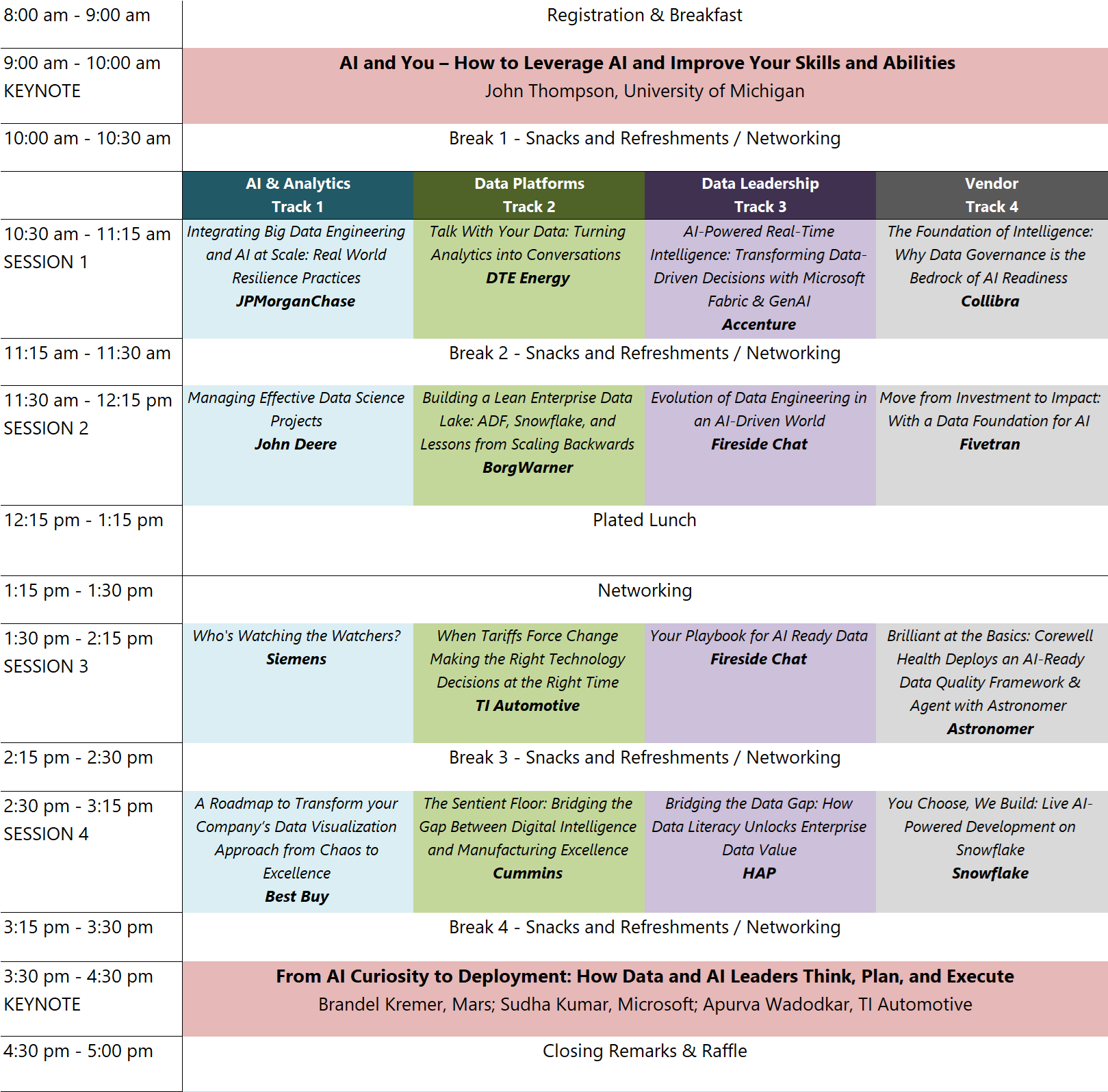

Agenda

**Breakout session and panel discussion details will be added as they are confirmed

Keynotes

AI is everywhere. GenAI seems to have taken over the minds of many managers, executives, investors, and business leaders. But in reality, there is significant confusion about where AI can help us in our daily lives and work, and where it can be leveraged to make work better, faster, and easier. In this session we will talk about AI for personal productivity, AI for team improvement, and AI for strategic advantage. We will talk about Predictive AI, Causal AI, and, of course, Generative AI. Bring your questions; we will engage in a lively dialog about where the world of work really stands in relation to the advance of AI.

Presented by: John Thompson, University of Michigan

John is an international technology executive with over 38 years of experience in the fields of data, advanced analytics, and artificial intelligence (AI). John is the Vice President, Product Analytics at CCC Intelligent Solutions. John’s responsibilities include designing, developing, and deploying innovative ways to integrate data, deliver insights, and apply Predictive AI, Generative AI, and Causal AI to CCC products and services.

John is a Lecturer at the University of Michigan in the School of Information (UMSI) and Ross Business School, where he teaches classes based on his books and on the subjects of AI and building and managing analytics teams. He was recently named an Innovation Fellow for the Center of Academic Innovation.

Previously, John was the global head of AI at EY. His role was to actively lead the design, development, implementation, and use of innovative AI solutions, including Generative AI, Traditional/Classical AI, and Causal AI, across all EY Service Lines and functions and for EY's clients. The team comprised an Applied AI Research group, a product development organization, and an AI Consulting Practice.

How do leading organizations move from experimentation to measurable results? In this closing conversation, leaders from global distribution, advanced manufacturing, and software share what it really takes to discover, prioritize, and scale AI use cases in the real world.

In this closing keynote panel, expect an honest discussion about what’s working, what’s harder than expected, and what separates AI ambition from AI impact.

Keynote Panelist: Brandel Kremer, Mars

Brandel Kremer is Director of Global Data Operations & Governance at Mars, where she leads AI-enabled data operations and enterprise master data governance. She helps teams reduce risk while improving operational efficiency by translating AI strategy into secure, controlled, and scalable execution. Her work focuses on the guardrails that make data and AI trustworthy: risk-based decisioning, accountable model operations, robust quality controls, and audit-ready governance that protects customers and the business. Brandel has led complex, cross-functional transformations and built operating models that deliver consistent outcomes without sacrificing reliability or compliance. She holds an MBA and multiple technical certifications spanning cloud technologies and security, has completed the ISACA Advanced AI Audit course, and has earned a post-graduate certificate in Advanced AI & Generative AI from Purdue University.

Keynote Panelist: Sudha Kumar, Microsoft

Sudha Kumar serves as a Strategic Account Technology Strategist at Microsoft, where she leads AI strategy, cloud modernization, and enterprise transformation for a major Financial Services and Insurance organization. With more than 25 years of experience spanning AI Transformation, analytics architecture, SAP and operations modernization, and global delivery leadership, Sudha helps organizations adopt Microsoft products and solutions like M365 Copilot, Microsoft Foundry, and the broader Microsoft Cloud to improve productivity and operational excellence. She is recognized for her ability to align technology with business priorities, guide responsible AI adoption, and drive measurable outcomes through data‑driven decision‑making. Sudha is a trusted advisor to executives, known for her strong communication, stakeholder engagement, and practical approach to large‑scale transformation.

Keynote Panelist: Apurva Wadodkar, TI Automotive

Apurva Wadodkar is Senior Director and Head of Data & AI at TI Automotive, leading enterprise data, AI, master data management, enterprise integration, automation, and custom applications. She brings over 20 years of experience positioning data as a strategic asset in executive decision making.

She is widely recognized for building and scaling high-performing teams with a strong innovation mindset, empowering them to experiment and operationalize ideas at scale.

Apurva is also a distinguished thought leader and writer, with her articles published in respected outlets including CIO.com, Forbes Technology Council, and CDO Magazine, where she shares perspectives on data, analytics, and AI leadership.

Breakout Sessions

In today’s business environment, making smart, data-driven decisions in real time is essential for maintaining efficiency and quality in business operations. Join us to explore Microsoft Fabric Real-Time Intelligence and discover how to seamlessly ingest, analyze, and act on real-time data.

Learn how to:

1. Ingest real-time data from multiple sources into Fabric

2. Run lightning-fast queries for instant insights

3. Monitor key metrics with intuitive, real-time dashboards

4. Set up automated alerts and detect anomalies for proactive issue resolution

5. Leverage Copilot in Real-Time Intelligence to query data using natural language

Presented by: Mou Rakshit, Accenture

A seasoned data and analytics leader with 25+ years of enterprise experience, Mou Rakshit is a trusted architect of modern data platforms and scalable governance frameworks. As Group Manager of Data, AI & Analytics Engineering at Accenture/Avanade, she leads the design and delivery of lakehouse solutions that transform how organizations leverage their data for competitive advantage.

Her hands-on expertise spans Python, SQL, Tableau, and cloud platforms including Databricks, Azure, and AWS. Recognized as a Databricks Certified Data Engineer and CBIP Professional, Mou has delivered over 4,500 hours of professional enablement, speaking at conferences on modern data platforms and AI readiness. As an Adjunct Professor in Business Analytics at Trine University, she equips emerging leaders with real-world expertise.

Mou's mission: to help organizations unlock their data's full potential while building high-performing teams that drive measurable business impact.

Organizations invest millions of dollars in their data infrastructure with the goal of enabling data‑driven decision making, yet many fail to maximize the full value of those investments. This session argues that comprehensive data literacy initiatives are the missing link between well-funded data infrastructure and the employees expected to harness it. Data consumers within the business frequently lack the fundamental, tool‑specific, and domain‑specific skills needed to interpret, question, and apply data effectively. By establishing comprehensive data literacy programs, businesses can bridge this gap and empower employees at all levels to become confident, competent data users. The session will demonstrate how improved data literacy enhances enterprise value, drives innovation, strengthens customer service, and increases overall employee effectiveness. Attendees will also gain a practical framework for designing a data literacy initiative that unlocks the full potential of their robust data infrastructure.

Presented by: Josh Kahl, Health Alliance Plan

Josh is a data literacy leader at Health Alliance Plan who specializes in helping organizations unlock the full value of their data investments by empowering employees with the skills and confidence to use data effectively. He designs and scales enterprise-wide data and AI literacy initiatives that have upskilled hundreds of employees, strengthened business outcomes, and delivered measurable financial impact through foundational, tool-specific, and domain-specific programming. Josh blends strategic leadership, technical expertise across the Microsoft data stack, and tactical know-how to enable teams to deliver true data-driven results. Josh brings a practical, people‑centered approach to bridging the gap between robust data infrastructure and the workforce that uses it.

Help! I’m drowning in dashboards!

Most companies are drowning in information. Tools like Power BI or Tableau promote self-service dashboards, which sounds tempting to overworked analysts. Sadly, then reality hits. We are flooded with resource-hogging, poorly designed dashboards that contradict one another, break often, and perform poorly. Yet no one is willing to shut things down and centralize everything. After all, who has the time and resources to build every metrics request?

This interactive session will take you through a step-by-step journey to clearly define and promote excellence in data visualization. Based on best practices from Fortune 500 companies, we will show you a way to balance centralization and standardization while still allowing self-service. Sound too good to be true? Come and hear our story of transforming our chaotic world of dashboards at Best Buy.

Presented by: Jeff Nieman, Best Buy

Jeff Nieman is currently the Senior Director of Data Strategy and Visualization at Best Buy, where he leads a global organization focused on data governance, data analytics, and data reporting/visualization. Jeff’s passion is for everyone to have the ability to tell and understand their story through data. He previously led data science teams at Cisco, Ford, and McDonald’s. Jeff has an undergraduate degree in engineering from the University of Michigan (Go Blue!) and a Master’s Degree in Data Science from CUNY, where he also serves as adjunct faculty in the graduate data science program. Jeff is part of the IIA Analytics Expert Network, is a frequent presenter at conferences and events, and in 2025 was named one of the Top Data Science Transformers as a recipient of the prestigious AI Top 100 award.

This session explores how to effectively evaluate Large Language Models (LLMs) in real-world production systems. As organizations increasingly rely on LLMs for applications like search, recommendations, and conversational AI, traditional evaluation approaches fall short. Through practical insights and industry examples, this talk covers why LLM evaluation is fundamentally different, how to design meaningful metrics aligned with business goals, and how to build scalable, continuous evaluation pipelines.

Attendees will learn about key concepts such as end-to-end evaluation, embedding and RAG evaluation, LLM-as-a-judge frameworks, and performance monitoring in production environments. The session emphasizes an evaluation-driven development mindset—ensuring that LLM systems are reliable, measurable, and continuously improving in real-world use cases.

Presented by: Supriya Bachal, Siemens

Supriya Bachal is an AI/ML Engineer specializing in large language models, data pipelines, and production-scale AI systems. She currently works with Siemens Digital Industries, where she focuses on building scalable AI solutions, including ingestion frameworks, validation systems, and LLM-powered applications across technical documentation and enterprise platforms. With a strong foundation in machine learning and MLOps, Supriya has led initiatives involving cloud-native architectures on AWS and Azure, embedding-based semantic systems, and real-time monitoring for AI pipelines. She is also the creator of llm-metrics-lite, a lightweight toolkit for evaluating LLM outputs. Supriya is passionate about bridging research and industry, with ongoing work in federated learning, LLMOps, and audience segmentation using large language models. She has been recognized as a STEM mentor and actively contributes to the AI community through research, speaking, and mentoring initiatives. She was a finalist for the 2025 Woman in AI Award.

This session presents a technical case study of designing, implementing, and scaling an enterprise data lake at an S&P 500 company using Azure Data Factory, Snowflake, and Power BI—delivered by a lean, three-person internal data engineering team.

Rather than leading with heavy governance and upfront optimization, we prioritized ingestion reliability, scalable storage, and analytics-ready data models to support immediate business needs. As platform usage increased, technical constraints surfaced around access control, auditability, cost management, and operational consistency. These challenges drove the introduction of role-based access control, standardized ingestion patterns, Snowflake cost optimization techniques, and supporting documentation.

The session focuses on architectural decisions, sequencing trade-offs, and operational lessons learned, offering practical guidance for engineers and architects building data platforms under real enterprise constraints.

Presented by: Ioana Gonçalves, BorgWarner

Ioana Gonçalves is a Data Engineering Manager who led the creation and evolution of an enterprise‑scale data lake at an S&P 500 company using Snowflake, Azure Data Factory, and Power BI. She specializes in turning ambiguity into structure, building lean and effective data engineering practices, and enabling organizations to make better decisions through scalable, reliable data platforms.

She brings extensive international experience in data warehousing for the banking and automotive industries, along with deep expertise in data modeling gained through work in Europe with Oracle and other software companies.

In today’s data-driven world, the fusion of Big Data Engineering and Artificial Intelligence is revolutionizing how organizations unlock value from their information assets. This session explores the foundational pillars of Big Data—Volume, Velocity, Variety, Veracity, and Value—and demonstrates how robust data ingestion, scalable storage, and advanced processing architectures form the backbone of trustworthy AI systems. Attendees will discover how high-quality data pipelines fuel training, feature stores, and real-time inference, while governance, observability, and security ensure reliability and compliance at scale. The talk will highlight strategies for engineering trust, including metadata management, lineage tracking, and cost-aware AI, empowering organizations to build resilient, explainable, and future-ready solutions. Join us to learn how integrating immutable data modeling and shared feature logic can drive innovation, reproducibility, and point-in-time correctness—transforming Big Data from a technical challenge into a strategic advantage for AI excellence.

Presented by: Amit Meshram, JPMorganChase

Amit Meshram is an Executive Director and Principal Software Engineer with over 20 years of experience building and governing large-scale, mission-critical systems at enterprise scale. He specializes in big data platforms, low latency design, AI-driven systems, cloud and distributed architectures, and resiliency engineering that power hundreds of millions of transactions daily. A recognized technology leader and inventor with multiple patents, Amit bridges deep engineering rigor with real-world business impact, helping organizations design systems that are not just scalable—but trustworthy, resilient, and future-ready.

What if getting insights from your data felt less like running reports and more like asking a question? In this session, we explore how conversational analytics, natural-language interfaces, and AI copilots are transforming the way organizations interact with data. Learn how “talking with your data” accelerates decision-making, empowers non-technical teams, and unlocks faster, more intuitive insights across the business. We’ll cover the foundational concepts of how Databricks Genie works, walk through a real-world use case, and discuss what leaders should consider when bringing conversational data experiences into their organization.

Presented by: Uma Poduturu, DTE Energy

Uma is a strong technology and data leader specializing in digital transformation, IT strategy, and modern platform delivery. Uma excels at aligning technology investments with business goals to drive growth, efficiency, and measurable outcomes. With a strong track record in system modernization, AI adoption, and team leadership, Uma builds high-performing organizations that deliver secure, scalable solutions. Uma is passionate about turning strategy into execution through data, automation, and cross-functional collaboration to enhance decision-making, customer experience, and operational performance.

Generative AI has changed how we think, but Physical AI is changing how we work. This session explores the critical shift from digital "thinking" to Operative Intelligence—where AI moves out of the screen and into the physical world. Using the manufacturing floor as our anchor, we will examine how Physical AI serves as a "digital nervous system" for industry. Drawing on expertise in Prognostics and HSE, I will demonstrate how machines are evolving to perceive their environment, predict their own maintenance needs, and create autonomous "safety bubbles" for the human workforce.

While the foundation is the factory, the lessons of "Safe Doing" are universal. We will discuss how manufacturing-born innovations—like real-time failure prevention and adaptive safety—are setting the standard for excellence across Healthcare, Telecom, and Logistics.

Presented by: Aashika Varadharajan, Cummins

Aashika leads global teams in leveraging advanced analytics and machine learning to drive operational transformation across manufacturing and service domains. She specializes in predictive modeling, anomaly detection, and MLOps, delivering measurable impact including a 15% reduction in manufacturing downtime and a 26% decrease in HSE incidents.

She builds scalable data pipelines using PySpark and Azure Databricks, deploying production ML models with MLflow and CI/CD frameworks. Her technical expertise spans deep learning architectures, statistical modeling, and real-time analytics across cloud platforms. Passionate about innovation at the intersection of AI and operational excellence, Aashika empowers teams to deliver transformative solutions that optimize safety, efficiency, and performance.

Tariffs introduced sudden uncertainty, regulatory complexity, and significant data demands across the business. What had been a stable and cost-effective data environment was no longer able to keep pace. A small team was required to respond quickly, process large volumes of data, and adapt to constant rule changes while maintaining accuracy and confidence in the numbers.

This session shares how tariff pressure became the catalyst for moving to cloud-based data solutions. It explains what drove the shift, how decisions were made under tight timelines, and how the organization balanced speed, risk, and long-term sustainability. Rather than focusing on technology trends, the discussion centers on business drivers, tradeoffs, and outcomes.

Presented by: Tim Dieltjens, TI Automotive

Tim Dieltjens is a Data Architect at TI Automotive with extensive experience supporting data-driven decision making in a global manufacturing organization. He has led enterprise reporting and data platform initiatives that enabled regulatory compliance, improved financial visibility, and delivered measurable cost savings. Tim has worked closely with business and technology leaders to modernize data capabilities while managing risk, technical debt, and limited resources. His approach emphasizes practical decision making, aligning technology investments with business priorities, and adopting new platforms only when they create clear and lasting value.

A tremendous amount of work and investment goes into collecting data and making it usable for others. It's imperative that we use it to provide clear business value. Despite your best efforts, you may notice that your dashboards, insights, and predictions are under-utilized - even though you provided exactly what the end-user asked for. Often this is because our end-users aren't great at defining requirements. Sometimes we don't ask the right questions. Sometimes we're not focused on the right things.

Join this session for an engaging discussion on how to set up your data science team for success by:

- Front-loading your process with focused, valuable work.

- Managing data science projects by setting clear goals and requirements (and identifying the requirements they didn't tell you about).

- Ensuring your data product is achieving the intended value.

Presented by: Joshua Neymeiyer, John Deere

Josh is a Data and Analytics Catalyst and machine health leader at John Deere with experience in predictive analytics, process development, and cross-functional innovation. He is passionate about connecting customer challenges with analytics solutions. Josh holds a B.S. in Heavy Equipment Service Engineering Technology from Ferris State University and has earned multiple enterprise awards for innovation and contributions to connected support solutions.

Panel Sessions

This panel cuts through the hype behind “AI-ready data” to define what it truly means in practice—and why it’s the foundation for scalable, high-impact AI. Data leaders will share practical perspectives on aligning data quality, governance, and architecture with real business needs. The conversation will focus on what helps organizations move beyond pilots and make AI work in day-to-day operations. Attendees will leave with a clearer, more grounded view of what “AI-ready data” actually looks like.

Session Panelist: Harini Rajagopal, AAA Life Insurance

Harini Rajagopal is a champion and advocate for Data Engineering with deep expertise in building scalable, resilient data platforms that ingest and process terabytes of data. With a background spanning software development, data management, and strategic execution, she focuses on designing robust pipelines and growing high-impact data teams. Harini is an active speaker and panelist at industry conferences including Data in the D and the Great Lakes Data, AI & Analytics Summit, and has shared her insights through podcasts, technical writing, and infrastructure-as-code advocacy. She holds multiple AWS certifications, a Strategy Execution certificate from Harvard Business School Online, and is a peer reviewer for technical publications and research forums. Harini is also a dedicated mentor and community contributor, supporting the next generation of data engineers and leaders.

Session Panelist: Kalpana Yendluri, Great Lakes Water Authority

Kalpana Yendluri is a proven technology executive with a 30-year career specializing in enterprise data platforms, governance, and driving business transformation through data and AI. She has a strong record of architecting scalable, secure, and cost-optimized data solutions for large financial and commercial organizations.

Kalpana has spearheaded major initiatives, including leading the data platform transformation for BMO's acquisition of Bank of the West and driving AI adoption at the Great Lakes Water Authority (GLWA). Her expertise in aligning technology with business objectives has consistently delivered measurable value and operational excellence.

Recognized as a Global Data Power Woman by CDO Magazine for three consecutive years (2022-2024), Kalpana is a respected leader and a 2025 Hall of Fame inductee at Wayne State University, where she also earned her master’s degree in computer science.

Session Panelist/Moderator: Pete Cooney, Jackson National Life

Pete Cooney is a technology professional with over 25 years of experience in data, data integration, application integration, business architecture, process engineering, and enterprise architecture. He is currently the Enterprise Platform Architect for Data/Data Architecture Manager on the Enterprise Architecture team at Jackson National Life, a leading provider of annuities and other insurance-related investment vehicles.

As AI rapidly reduces the effort required to write SQL and build data pipelines, the role of data engineering is evolving in real time. This panel explores how data engineers are shifting from code producers to system designers, with greater focus on architecture, orchestration, governance, and reliability. Data leaders will share how AI is reshaping workflows, decision-making, and productivity across teams. Attendees will gain a clearer view of how the data engineering function is changing and where to focus as AI becomes embedded across the data stack.

Session Panelist: Eric Robinson, Mercury Insurance

Eric Robinson is a senior analytics leader with deep experience building data platforms and governance practices that enable consistent, trustworthy analytics at scale. As Senior Manager of Analytics Engineering at Mercury Insurance, Eric leads enterprise initiatives spanning analytics engineering, data governance, and semantic enablement—ensuring data is not only accessible, but clearly defined, well-governed, and aligned to business meaning. His work emphasizes shared metrics, governed semantic layers, and ownership models that help analytics teams scale with confidence.

Previously, Eric served as Director of Data Management and Analytics at AAA Life Insurance, where he built cross-functional teams and advanced data quality, reporting, and analytics maturity. Known for translating governance, ontology, and semantic consistency into practical, operational frameworks, Eric brings a practitioner’s perspective grounded in real-world delivery and is passionate about developing the next generation of analytics leaders.

Session Panelist/Moderator: Mark Fisher, Mission Veterinary Partners

Mark Fisher is the Director of Analytics for Mission Veterinary Partners, a Michigan-based veterinary services organization providing care for millions of pets across the country. In December 2024, Southern Veterinary Partners (SVP) and Mission Veterinary Partners (MVP) joined to create one company, with a shared mission to take the very best care of its team members and to provide exceptional care to clients and patients.

Mark is a Digital Transformation and Analytics leader with repeated success in multiple Fortune 500 environments and a specialized focus in analytics enablement across 1000+ user bases. He has over 12 years of success at two “Big 4” Firms in leadership roles serving Fortune 100 clients. Mark has extensive experience in multiple industries including Health Care, Automotive, Utilities, Retail, and Media & Entertainment.

Sponsored Sessions

What happens when the audience takes the wheel? Let’s find out! Through interactive polling, attendees will shape the demo in real-time—selecting data themes, choosing analytics features, and guiding application design. Using Cortex Code, Snowflake's AI development assistant, we'll demonstrate rapid development leveraging Streamlit for visualization, Cortex Analyst for natural language SQL, and built-in ML functions for predictive insights. No pre-baked demos—just live development driven by your choices. This "choose your own adventure" format showcases how Snowflake's unified AI platform enables teams to move from concept to production-ready applications in minutes, not months.

Presented by: Christopher Smith, Snowflake

Chris Smith is a Senior Solution Engineer at Snowflake where he is a thought leader for data and AI, crafting solutions on Snowflake for all the largest Automotive OEMs in the world. Prior to Snowflake, Chris built an extensive background in the automotive industry, working directly within the IT and analytics space at companies like GM and Stellantis for 15 years. He has a Bachelor of Science in Computer Science & Mathematics and an MBA focused on Management Information Systems, which allow Chris to bring a unique blend of technical expertise, business acumen, and practical industry knowledge to his customers. Beyond his professional pursuits, Chris is an avid DIY enthusiast, tackling home renovation projects and working on cars in his spare time. He finds balance in his personal life by dedicating quality time to his wife and four young children.

AI is only as smart as the data underneath it, and many data platforms lack the systems to support it. This session explores how Astro, Astronomer's managed orchestration platform for Apache Airflow, becomes the backbone of a modern data quality system, connecting SODA DQ Framework checks, Snowflake transformations with dbt, and a LangChain-powered agent that automatically contextualizes failures and surfaces root cause analysis. The result highlights how deterministic systems are the foundation that intelligence depends on to scale.

Presented by: Aaron Tellis, Corewell Health

Aaron Tellis is a Senior Data Engineer and AI Lead for the Development Center of Excellence at Corewell Health, where he leads AI initiatives by researching, recommending, and equipping developers with AI tools that improve development and DevOps workflows. Outside of his day job, he writes about machine learning systems, AI tooling, and enterprise adoption.

Presented by: Chris George, Astronomer

Chris is an ex-founder and 2x startup leader with 13 years of experience building data-focused products and teams across data science, analytics, and research. His background includes building the data function from seed through a $40M+ growth round at a fintech platform, scaling a consultancy to become one of Forbes’ Best Data & Analytics firms, and serving on President Barack Obama’s HQ analytics team. With experience spanning from pre-PMF to 200+ employee growth stages, Chris offers a unique perspective on the 0-to-1 craft of data applications. He is passionate about building systems that use data for innovation—specifically creating growth models that accelerate startup trajectories and developing platforms to power intelligent applications.

As organizations race to transition from AI experimentation to enterprise-scale implementation, they are discovering a hard truth: your AI is only as reliable as the data fueling it. Without a robust governance strategy, AI initiatives face significant hurdles, from "hallucinations" caused by poor data quality to severe compliance and security risks.

In this session, Bobbi Caggianelli explores why Data Governance is no longer a back-office compliance function but the essential engine for AI readiness. We will dive into the technical and strategic pillars required to move beyond the hype and deliver trusted AI outcomes.

Key Pillars of AI Readiness:

- Data Quality: The AI Fuel Standard: Understand why high-fidelity data is non-negotiable. We’ll discuss how automated quality checks and observability prevent "garbage in, garbage out" from undermining model performance.

- The Semantic Layer & AI Agents: Learn how establishing a common business language allows autonomous agents to find, understand, and utilize the right data effectively.

- Data Products & Access Control: Discover how treating data as a product ensures high-quality, reusable assets with built-in, automated access controls.

- Governing the Unstructured: Explore strategies for managing the vast landscape of unstructured data (PDFs, docs, logs) that is now critical for feeding LLMs and RAG-based systems.

- The AI Governance Framework: Understand the necessity of an overarching framework that bridges data governance, use case oversight, and model lifecycle management.

Attendees will leave with a blueprint for building a governed data ecosystem that balances rapid innovation with the trust and transparency required for the modern AI era.

Presented by: Bobbi Caggianelli, Collibra

Prior to joining Collibra, Bobbi Caggianelli spent four years building a data governance and quality practice from scratch at a multi-billion dollar life and annuity company. She joined Collibra after working with Collibra's toolset, in part to share her experience with others. Her goal is to help companies build a successful governance practice and show them how Collibra enables that maturation journey. Bobbi has an MS in Business Analytics and is a Master Black Belt in Six Sigma.

AI investment is accelerating rapidly, yet many organizations struggle to move AI from experimentation to production. The challenge isn’t ambition — it’s data. Fragmented systems, manual pipelines, inconsistent governance, and rising costs are widening the gap between AI expectations and operational reality.

The solution is building the right data foundation. By standardizing and automating data movement, management, and transformation, organizations reduce complexity, lower costs, and free their teams to focus on delivering insights and AI-driven outcomes.

In this session, you will discover:

- Why AI investment is outpacing AI in production, and the core data challenges holding enterprises back

- Why AI success starts with a modern, automated, and governed data foundation

- How leading global organizations are unlocking measurable impact with a modern data foundation

Presented by: James Render, Fivetran

James Render is a Senior Sales Engineer at Fivetran. He has been in the data space for over 12 years, working in analytics, data management, and data integration. He has worked with enterprise organizations on data infrastructure and integration for 10 years. During his time at Fivetran, James has partnered to onboard various Fortune 100, 500 and G2K organizations, modernizing their data movement infrastructure for advanced analytics and generative AI initiatives.